I Used The Web For A Day On Internet Explorer 8


Chris Ashton · 2019-03-19T12:00:08+01:00 · 2019-03-19T11:11:07+00:00

This article is part of a series in which I attempt to use the web under various constraints, representing a given demographic of user. I hope to raise the profile of difficulties faced by real people, which are avoidable if we design and develop in a way that is sympathetic to their needs.

Last time, I navigated the web for a day using a screen reader. This time, I spent the day using Internet Explorer 8, which was released ten years ago today, on March 19th, 2009.

Who In The World Uses IE8?

Before we start, a disclaimer: I am not about to tell you that you need to start supporting IE8.

There’s every reason not to support IE8. Microsoft officially stopped supporting IE8, IE9 and IE10 over three years ago, and Microsoft executives are even telling you to stop using Internet Explorer 11.

But as much as we developers hope for it to go away, it just. Won’t. Die. IE8 continues to show up in browser stats, especially outside of the bubble of the Western world.

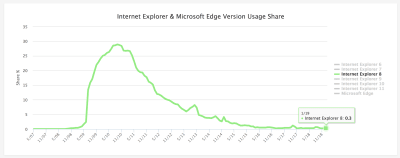

Browser stats have to be taken with a pinch of salt, but current estimates for IE8 usage worldwide are around 0.3% to 0.4% of the desktop market. The lower end of the estimate comes from W3Counter.

The higher estimate comes from StatCounter (the same data feed used by the “Can I use” usage table). It estimates global IE8 desktop browser proportion to be around 0.37%.

I suspected we might see higher IE8 usage in certain geographical regions, so I drilled into the data by continent.

IE8 Usage By Region

Here is the per-continent IE8 desktop proportion (data from February 2018 to January 2019):

| Rank | Continent | IE8 share of desktop browsers |
|---|---|---|
| 1 | Oceania | 0.09% |
| 2 | Europe | 0.25% |
| 3 | South America | 0.30% |
| 4 | North America | 0.35% |
| 5 | Africa | 0.48% |
| 6 | Asia | 0.50% |

Someone in Asia is more than five times as likely to be using IE8 as someone in Oceania.

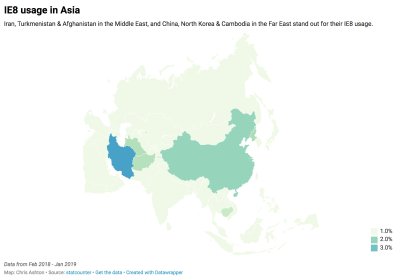

I looked more closely into the Asian stats, noting the proportion of IE8 usage for each country. Six countries clearly lead for IE8 usage, after which the figures drop to be comparable with the world average:

| Rank | Country | IE8 share of desktop browsers |
|---|---|---|
| 1 | Iran | 3.99% |
| 2 | China | 1.99% |
| 3 | North Korea | 1.38% |
| 4 | Turkmenistan | 1.31% |
| 5 | Afghanistan | 1.27% |
| 6 | Cambodia | 1.05% |
| 7 | Yemen | 0.63% |
| 8 | Taiwan | 0.62% |
| 9 | Pakistan | 0.57% |
| 10 | Bangladesh | 0.54% |

This data is summarized in the map below:

Incredibly, IE8 makes up around 4% of desktop users in Iran: more than forty times the proportion of IE8 users in Oceania.
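Both of these comparisons are simple ratios: the IE8 share in one region divided by the IE8 share in Oceania. The TypeScript sketch below does that arithmetic with the figures from the tables. The variable and function names are my own, purely for illustration, and it assumes the shares are directly comparable across regions (which, given the pinch of salt above, is itself an assumption).

```typescript
// IE8 share of desktop browsers, in percent, copied from the continent table.
const ie8ShareByContinent = {
  Oceania: 0.09,
  Europe: 0.25,
  "South America": 0.3,
  "North America": 0.35,
  Africa: 0.48,
  Asia: 0.5,
} as const;

// Iran's figure comes from the per-country Asia table.
const ie8ShareIran = 3.99;

// How many times larger `share` is than `baseline` (both in percent).
function timesMoreLikely(share: number, baseline: number): number {
  return share / baseline;
}

const asiaVsOceania = timesMoreLikely(ie8ShareByContinent.Asia, ie8ShareByContinent.Oceania);
const iranVsOceania = timesMoreLikely(ie8ShareIran, ie8ShareByContinent.Oceania);

console.log(asiaVsOceania.toFixed(2)); // 5.56
console.log(iranVsOceania.toFixed(2)); // 44.33
```

So Asia's share is roughly 5.6 times Oceania's and Iran's roughly 44 times; "five times" and "forty times" are, if anything, slight understatements.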

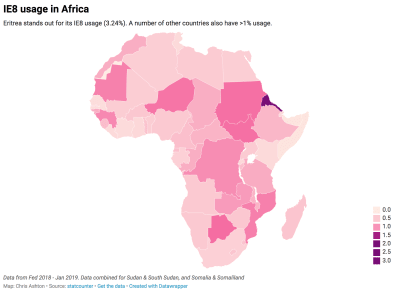

Next, I looked at the country stats for Africa, as it had around the same overall IE8 usage as Asia. There was a clear winner (Eritrea), followed by a number of countries above or around the 1% usage mark:

| Rank | Country | IE8 share of desktop browsers |
|---|---|---|
| 1 | Eritrea | 3.24% |
| 2 | Botswana | 1.37% |
| 3 | Sudan & South Sudan | 1.33% |
| 4 | Niger | 1.29% |
| 5 | Mozambique | 1.19% |
| 6 | Mauritania | 1.18% |
| 7 | Guinea | 1.12% |
| 8 | Democratic Republic of the Congo | 1.07% |
| 9 | Zambia | 0.94% |

This is summarized in the map below:

Whereas the countries in Asia that have higher-than-normal IE8 usage are roughly batched together geographically, there doesn’t appear to be a pattern in Africa. The only pattern I can see (unless it’s a coincidence) is that a number of the world’s largest IE8-using countries famously censor internet access, and therefore probably don’t encourage or allow updating to more secure browsers.

If your site is aimed at a purely Western audience, you’re unlikely to care much about IE8. If, however, you have a burgeoning Asian or African market — and particularly if you care about users in China, Iran or Eritrea — you might very well care about your website’s IE8 experience. Yes — even in 2019!

Who’s Still Using IE?

So, who are these people? Do they really walk among us?!

Whoever they are, you can bet they’re not using an old browser just to annoy you. Nobody deliberately chooses a worse browsing experience.

Someone might be using an old browser for any of the following reasons:

- Lack of awareness: they simply aren’t aware that they’re using outdated technology.
- Lack of education: they don’t know the upgrade options and alternative browsers open to them.
- Lack of planning: they dismiss upgrade prompts because they’re busy, but don’t have the foresight to upgrade during quieter periods.
- Aversion to change: the last time they upgraded their software, they had to learn a new UI. “If it ain’t broke, don’t fix it.”
- Aversion to risk: the last time they upgraded, their machine slowed to a crawl, or they lost their favorite feature.
- Software limitation: their OS is too old to let them upgrade, or their admin privileges may be locked down.
- Hardware limitation: newer browsers are generally more demanding of hard disk space, memory and CPU.
- Network limitation: a capped data allowance or slow connection means they don’t want to download 75MB of software.
- Legal limitation: they might be on a corporate machine that only condones the use of one specific browser.

Is it really such a surprise that there are still people around the world who are clinging to IE8?

I decided to put myself in the shoes of one of these anonymous souls, and browse the web for a day using IE8. You can play along at home! Download an “IE8 on Windows 7” virtual machine from the Microsoft website, then run it in a virtualizer like VirtualBox.

IE8 VM: Off To A Bad Start

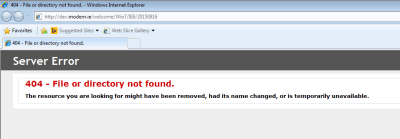

I booted up my IE8 VM, clicked on the Internet Explorer program in anticipation, and this is what I saw:

Hmm, okay. Looks like the default web page pulled up by IE8 no longer exists. Well, that figures. Microsoft has officially stopped supporting IE8, so why should it make sure the IE8 landing page still works?

I decided to switch to the most widely used site in the world.

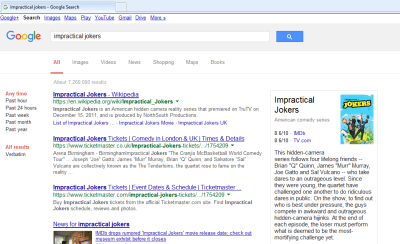

It’s a simple site, therefore difficult to get wrong — but to be fair, it’s looking great! I tried searching for something:

The search worked fine, though the layout looks a bit different to what I’m used to. Then I remembered — I’d seen the same search result layout when I used the Internet for a day with JavaScript turned off.

For reference, here is how the search results look in a modern browser with JavaScript enabled:

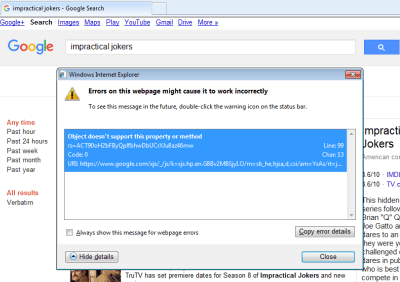

So, it looks like IE8 gets the no-JS version of Google search. I don’t think this was necessarily a deliberate design decision — it could just be that the JavaScript errored out:

Still, the end result is fine by me — I got my search results, which is all I wanted.
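Whether or not Google does this on purpose, a common deliberate way to hand old browsers the no-JS experience is the “cutting the mustard” feature test popularised by the BBC: run the enhanced JavaScript only if a few modern features exist, and otherwise leave the server-rendered page alone. Here is a minimal TypeScript sketch of that test. `cutsTheMustard`, `BrowserEnvironment` and the two fake environments are hypothetical names for illustration, and this is the BBC-style check, not anything taken from Google’s code.

```typescript
// The globals the test inspects. Using our own interface (rather than the DOM's
// Window type) lets us pass in fake environments to try the logic out.
interface BrowserEnvironment {
  document: object;
  window: object;
}

// "Cutting the mustard": only run the enhanced JavaScript if all three exist.
// IE8 has querySelector and localStorage but lacks addEventListener (it only
// offers attachEvent), so it fails and keeps the core, no-JS page.
function cutsTheMustard(env: BrowserEnvironment): boolean {
  return (
    "querySelector" in env.document &&
    "localStorage" in env.window &&
    "addEventListener" in env.window
  );
}

// Hypothetical fake environments, standing in for real browsers.
const ie8Like: BrowserEnvironment = {
  document: { querySelector: () => null },
  window: { localStorage: {}, attachEvent: () => undefined },
};
const modernLike: BrowserEnvironment = {
  document: { querySelector: () => null },
  window: { localStorage: {}, addEventListener: () => undefined },
};

console.log(cutsTheMustard(ie8Like)); // false
console.log(cutsTheMustard(modernLike)); // true
```

In a real page you would call `cutsTheMustard({ document, window })` and only load the enhanced bundle when it returns `true`. The appeal of this approach is that the failure mode is a working basic page rather than a half-broken enhanced one.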

I clicked through to watch a YouTube video.

YouTube

There’s quite a lot broken about this page, all of it down to little quirks in IE.

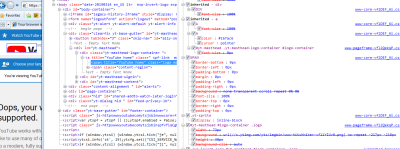

The logo, for instance, is zoomed in and cropped. This is down to IE8 not supporting SVG, and what we’re actually seeing is the fallback option provided by YouTube. They’ve applied a background-image CSS property so that, in the event of no SVG support, you’ll still get an attempt at displaying the logo. Only the image isn’t scaled to fit, so it’s a little too far zoomed in. (IE8 doesn’t support the background-size property at all, so any scaling set that way would be ignored.)

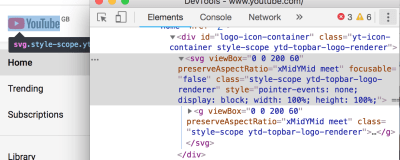

background-image on the logo span, which pulls in a sprite.

For reference, here is the same page in Chrome (see how Chrome renders an SVG instead):

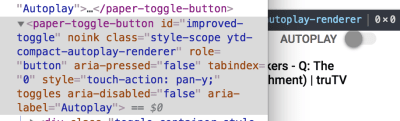
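The logo behaviour boils down to two feature checks: does the browser support SVG, and does it support `background-size`? IE8 supports neither (both arrived in IE9). The TypeScript sketch below shows how a site might pick a logo strategy from those checks. The function names, interfaces and the three strategy labels are hypothetical; the SVG check is in the style of Modernizr’s classic `createSVGRect` test; and I’m not claiming this is what YouTube actually does.

```typescript
// Minimal shapes of the objects the checks touch (our own types, not lib.dom's).
interface SvgCapableDoc {
  createElementNS?: (ns: string, tag: string) => object;
}
interface StyleLike {
  [prop: string]: unknown;
}

const SVG_NS = "http://www.w3.org/2000/svg";

// IE8 has no document.createElementNS at all, so it fails at the first check.
function supportsSvg(doc: SvgCapableDoc): boolean {
  if (typeof doc.createElementNS !== "function") return false;
  const svg = doc.createElementNS(SVG_NS, "svg");
  return "createSVGRect" in svg;
}

// background-size arrived in IE9, so IE8's style object has no such property.
function supportsBackgroundSize(style: StyleLike): boolean {
  return "backgroundSize" in style;
}

// Hypothetical strategy labels for illustration.
type LogoStrategy = "inline-svg" | "scaled-sprite" | "native-size-sprite";

function chooseLogoStrategy(doc: SvgCapableDoc, style: StyleLike): LogoStrategy {
  if (supportsSvg(doc)) return "inline-svg";
  // No SVG, but the sprite can be shrunk to fit with background-size.
  if (supportsBackgroundSize(style)) return "scaled-sprite";
  // Neither: the sprite must already be drawn at its display size.
  return "native-size-sprite";
}

// Hypothetical fake environments, standing in for IE8 and a modern browser.
const ie8Doc: SvgCapableDoc = {};
const ie8Style: StyleLike = { backgroundImage: "" };
const modernDoc: SvgCapableDoc = { createElementNS: () => ({ createSVGRect: () => ({}) }) };
const modernStyle: StyleLike = { backgroundImage: "", backgroundSize: "" };

console.log(chooseLogoStrategy(ie8Doc, ie8Style)); // native-size-sprite
console.log(chooseLogoStrategy(modernDoc, modernStyle)); // inline-svg
```

In a real page you would pass `document` and an element’s `style` object. The last branch is the one that matters for IE8: with no SVG and no `background-size`, the fallback sprite has to be cut at exactly the size it will be displayed, otherwise you get a zoomed-in logo like the one in the IE8 screenshot.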

And what about that Autoplay toggle? It’s rendered like a weird looking checkbox:

This appears to be down to the use of a custom element (a paper-toggle-button, which is a Material Design element), which IE doesn’t understand:

paper-toggle-button is a custom element. (The screenshot is from Chrome DevTools, alongside how the Autoplay toggle SHOULD render.)

I’m not surprised this hasn’t rendered properly; IE8 doesn’t even cope with the basic semantic markup we use these days. Try using an